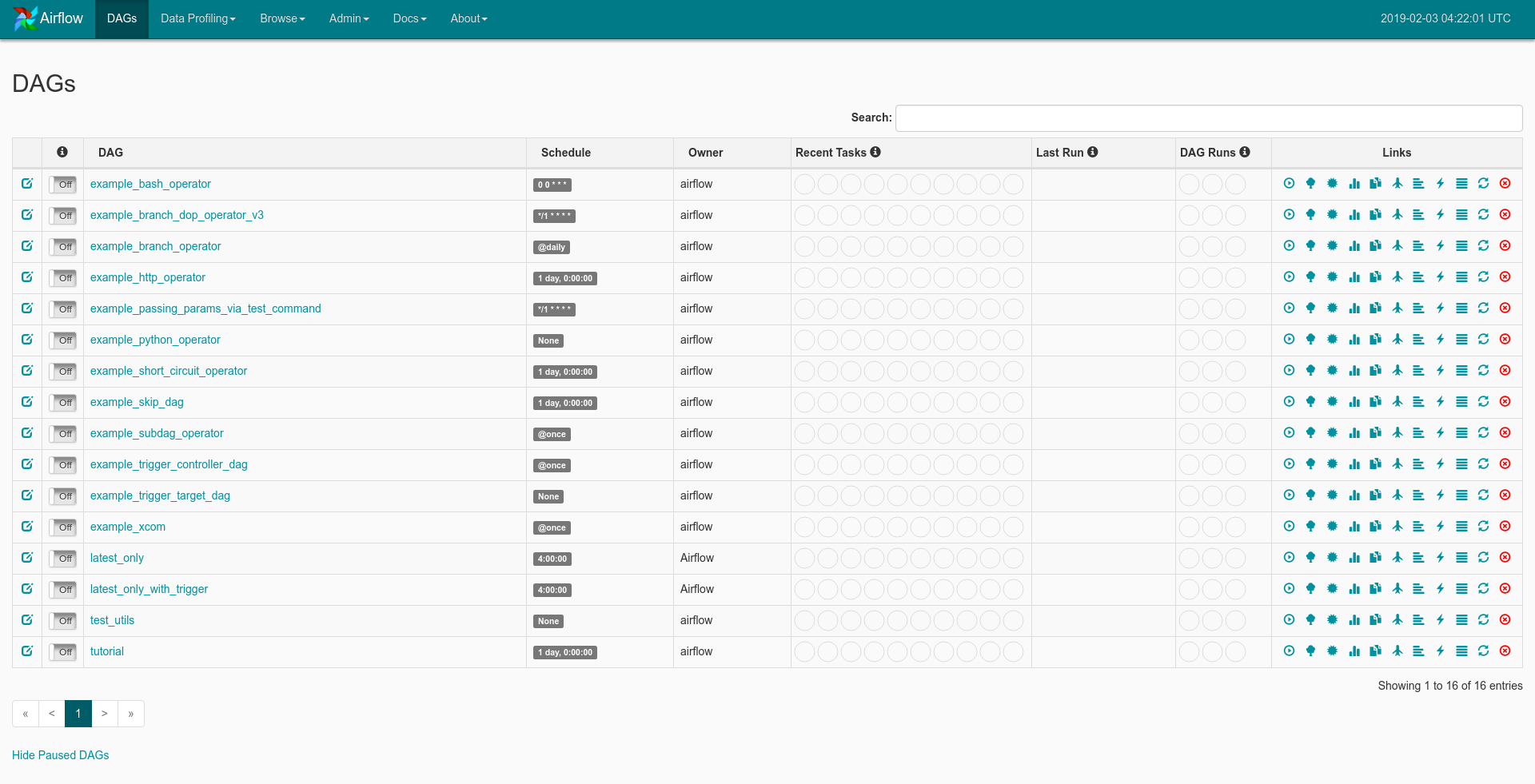

You can find the code for this example on GitHub.

The dagster-airflow package provides interoperability between Dagster and Airflow. The main scenarios for using the Dagster Airflow integration are:

- You want to do a lift-and-shift migration of all your existing Airflow DAGs into Dagster Jobs/SDAs.
- You want to trigger Dagster job runs from Airflow.

This integration is designed to help support users who have existing Airflow usage and are looking to explore using Dagster.

While Airflow and Dagster have some significant differences, there are many concepts that overlap. To ease the transition, we recommend using this cheatsheet to understand how Airflow concepts map to Dagster:

- Dagster uses normal Python functions instead of framework-specific operator classes. For off-the-shelf functionality with third-party tools, Dagster provides integration libraries.
- Dagster resources contain a superset of the functionality of hooks and have much stronger composition guarantees.
- Dagster provides rich, searchable metadata and tagging support well beyond what's offered by Airflow.
- Multiple isolated code locations with different system and Python dependencies can exist within the same Dagster instance.
- I/O managers are more powerful than XComs and allow passing large datasets between jobs.
- Triggering and configuring ad-hoc runs is easier in Dagster, which allows them to be initiated through Dagit, the GraphQL API, or the CLI.
- SDAs (software-defined assets) are more powerful and mature than datasets and include support for things like partitioning.

Due to some difficulties in setting up Airflow, we recommend first trying out the deployment using the local example here, as it contains the accurate configuration required to get the Airbyte operator up and running. The Airbyte Provider documentation on the Airflow project can be found here.

Set up the tools

First, make sure you have Docker installed. (We'll be using the docker-compose command, so your install should contain docker-compose.)

Start Airbyte

If this is your first time using Airbyte, we suggest going through our Basic Tutorial. This tutorial will use the Connection set up in the basic tutorial.

For the purposes of this tutorial, set your Connection's sync frequency to manual. Airflow will be responsible for manually triggering the Airbyte job.

If you don't have an Airflow instance, we recommend following this guide to set one up. Additionally, you will need to install the apache-airflow-providers-airbyte package to use the Airbyte Operator on Apache Airflow.

Create a DAG in Apache Airflow to trigger your Airbyte job

Create an Airbyte connection in Apache Airflow

Once Airflow starts, open the Airflow UI and navigate to the Connections page. Airflow will use the Airbyte API to execute our actions. The Airbyte API uses HTTP, so we'll need to create an HTTP Connection. Airbyte is typically hosted at localhost:8001. Configure Airflow's HTTP connection accordingly (we've provided a screenshot example). Don't forget to click save!

Retrieving the Airbyte Connection ID

We'll need the Airbyte Connection ID so our Airflow DAG knows which Airbyte Connection to trigger. This ID can be seen in the URL on the connection page in the Airbyte UI. The Airbyte UI can be accessed at localhost:8000.

Creating a simple Airflow DAG to run an Airbyte Sync Job

Place the following file inside the /dags directory.
